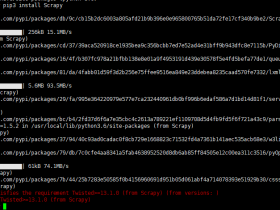

一、准备:

1、版本:python3

2、爬取目标地址: http://www.sina.com.cn/

二、查看源代码,分析:

1、整个分类 在 div main-nav 里边包含

2、分组情况:1,4一组 、 2,3一组 、 5 一组 、6一组

三、实现的python爬虫代码:

# coding=utf-8

import urllib.request

import ssl

from lxml import etree

# 获取html内容

def getHtml(url):

page = urllib.request.urlopen(url)

html = page.read()

html = html.decode('utf-8')

return html

# 获取内容

def get_title(arr, html, pathrole, sumtimes):

selector = etree.HTML(html)

content = selector.xpath(pathrole)

i = 0

while i <= sumtimes:

result = content[i].xpath('string(.)').strip()

arr.append(result)

i += 1

return arr

# 创建ssl证书

ssl._create_default_https_context = ssl._create_unverified_context

url = "http://www.sina.com.cn/"

html = getHtml(url)

# 第一次获取

arr = []

pathrole1 = '//div[@class="main-nav"]/div[@class="nav-mod-1 nav-w"]/ul/li'

retult1 = get_title(arr, html, pathrole=pathrole1, sumtimes=23)

# 第二次获取

if retult1:

pathrole2 = '//div[@class="main-nav"]/div[@class="nav-mod-1"]/ul/li'

retult2 = get_title(retult1, html, pathrole=pathrole2, sumtimes=23)

else:

print("error")

# 第三次获取

if retult2:

pathrole3 = '//div[@class="main-nav"]/div[@class="nav-mod-1 nav-mod-s"]/ul/li'

retult3 = get_title(retult2, html, pathrole3, sumtimes=11)

else:

print("error")

# 第四次获取

if retult3:

pathrole4 = '//div[@class="main-nav"]/div[@class="nav-mod-1 nav-w nav-hasmore"]/ul/li'

retult4 = get_title(retult3, html, pathrole4, sumtimes=1)

else:

print("error")

# 第五次获取:更多列表

if retult4:

pathrole5 = '//div[@class="main-nav"]/div[@class="nav-mod-1 nav-w nav-hasmore"]/ul/li/ul[@class="more-list"]/li'

retult5 = get_title(retult4, html, pathrole5, sumtimes=6)

print(retult5)

else:

print("error")

总结:

其实,以上代码,还可以继续优化,比如 xpath 的模糊匹配。可以把前四组合为一个,后面就留给大家继续去学习去操作吧!

![urllib.error.URLError: urlopen error [SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed (_ssl.c:777) 解决办法](https://www.fujieace.com/wp-content/themes/fujie/timthumb.php?src=https://www.fujieace.com/wp-content/uploads/2018/04/001-7.png&w=280&h=210&zc=1)